Executive Summary

Two high-severity vulnerabilities in Chainlit, an open-source AI application framework used to build conversational AI and enterprise chatbots, can allow malicious actors to leak sensitive data and potentially enable broader cloud compromise:

- CVE-2026-22218 (CVSS score: 7.1): Enables arbitrary file read via customized element payloads.

- CVE-2026-22219 (CVSS score: 8.3): Enables server-side request forgery (SSRF), allowing internal network and cloud metadata access.

Both flaws impact versions prior to Chainlit 2.9.4 and can be triggered without user interaction under common configurations, increasing risk for internet-exposed AI services. Patched releases are available; urgent updates are advised.

Background: What is Chainlit?

Chainlit is a Python-based framework for developing production-ready conversational AI applications. It’s widely integrated with:

- LangChain

- OpenAI and other LLM providers

- SQLAlchemy backends

- Cloud workflows and authentication modules

With hundreds of thousands of monthly downloads, Chainlit has become a core component of both internal and external AI-powered services in enterprise environments.

Vulnerability Details

CVE-2026-22218: Arbitrary File Read

- Location: /project/element update flow

- Vulnerability: Improper validation of user-controlled element paths allows an authenticated client to coerce Chainlit into copying arbitrary files into their session, then retrieving them.

- Impact: Confidential files may be disclosed, including environment variables (such as cloud API credentials and service keys), internal configuration, and even authentication token material.

- Severity: High (CVSS 7.1).
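The class of bug described above, a user-controlled path that is not confined to an allowed directory, is commonly addressed by resolving the path and checking containment before any copy or read. The following is a minimal sketch of that general technique, not Chainlit's actual patch; the function name `resolve_inside` and the example directory are hypothetical.

```python
from pathlib import Path


def resolve_inside(base_dir: str, user_path: str) -> Path:
    """Return the resolved path only if it stays inside base_dir.

    Hypothetical helper for illustration; not part of Chainlit.
    """
    base = Path(base_dir).resolve()
    # Joining with an absolute user_path discards base entirely, and ".."
    # segments can climb out of it, so resolve first and check afterwards.
    candidate = (base / user_path).resolve()
    if not candidate.is_relative_to(base):  # requires Python 3.9+
        raise ValueError(f"path escapes allowed directory: {user_path!r}")
    return candidate


if __name__ == "__main__":
    for attempt in ("uploads/report.png", "../../etc/passwd", "/etc/passwd"):
        try:
            print("allowed:", resolve_inside("/srv/app/files", attempt))
        except ValueError as exc:
            print("rejected:", exc)
```

Because `resolve()` also follows symlinks, a link inside the allowed directory that points elsewhere is rejected as well. The check should run on the same path object that is later opened, rather than on a separately constructed string.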

CVE-2026-22219: SSRF through SQLAlchemy Data Layer

- Location: SQLAlchemy backend used for element storage.

- Vulnerability: Malicious payloads cause outbound HTTP requests from the Chainlit server to arbitrary internal or cloud metadata endpoints.

- Impact: Access to internal services, IAM token retrieval, lateral movement, and metadata service abuse in cloud environments.

- Severity: High (CVSS 8.3).
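A common defense against this kind of SSRF is to resolve the destination host and refuse any address that is not publicly routable before the server makes the request. The sketch below illustrates that general idea using only the Python standard library. It is not the fix shipped by Chainlit, and the function name `is_safe_url` is an assumption made for illustration.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def is_safe_url(url: str) -> bool:
    """Return True only if every address the host resolves to is public.

    Hypothetical helper for illustration; not part of Chainlit.
    """
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.hostname:
        return False
    try:
        port = parsed.port or (443 if parsed.scheme == "https" else 80)
        infos = socket.getaddrinfo(parsed.hostname, port, proto=socket.IPPROTO_TCP)
    except (socket.gaierror, ValueError):
        # Unresolvable host or malformed port: treat as unsafe.
        return False
    for info in infos:
        address = ipaddress.ip_address(info[4][0])
        # is_global is False for loopback, private (RFC 1918), and
        # link-local ranges, which covers 169.254.169.254 metadata endpoints.
        if not address.is_global:
            return False
    return True


if __name__ == "__main__":
    for candidate in (
        "http://169.254.169.254/latest/meta-data/",
        "http://127.0.0.1:8000/admin",
        "file:///etc/passwd",
    ):
        print(candidate, "->", is_safe_url(candidate))
```

A check like this is only reliable if the subsequent request connects to the same address that was validated; otherwise a DNS record that changes between the check and the fetch can bypass it. Redirects need the same validation on every hop.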

Real-World Risk & Exploitability

- Exploit Prerequisites: Low or no interaction required; misconfigured or internet-exposed Chainlit endpoints are sufficient in many deployments.

- Attack Vector: Network-accessible services using Chainlit as backend.

- Data at Risk:

  - Cloud credentials and secrets, such as AWS keys and token signers.

  - Internal network addresses and APIs.

  - Application source files and logs.

  - Chat history and LLM prompts/responses, where the deployment stores them.

- In-the-Wild Evidence: No confirmed widespread exploitation has been publicly reported yet; however, internet-exposed AI services significantly increase the attack surface.

Why This Matters Beyond Chainlit

While these vulnerabilities impact Chainlit specifically, they reflect a broader pattern we’ve observed across modern AI application stacks.

Frameworks designed to accelerate LLM development often combine traditional web components such as file handling, HTTP requests and ORM layers with new execution paths introduced by AI workflows. This expands the attack surface while blurring trust boundaries between user input, application logic and backend infrastructure.

We’ve previously explored similar risks in AI frameworks and orchestration layers, where familiar vulnerabilities like SSRF, file access and injection resurface in new contexts, often with downstream impact due to access to cloud credentials, internal services and sensitive data flows.

Remediation

- Upgrade Immediately: Update all Chainlit installations to version 2.9.4 or later.

- Temporary Mitigations:

  - Restrict access to Chainlit service endpoints, for example with a WAF or internal-only network ACLs.

  - Review element upload/update flows for strict path/URL sanitization.

  - Audit cloud metadata access permissions.

- Monitor: Watch for unusual outbound requests or file access patterns.
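To support the upgrade step, a quick way to triage a Python environment is to compare the installed Chainlit version against the fixed release, 2.9.4. The sketch below assumes a plain `major.minor.patch` version string; the helper names are hypothetical, and the simplified parser treats a pre-release such as `2.9.4rc1` the same as `2.9.4`, so a dedicated version-parsing library is a better fit where pre-releases matter.

```python
import re
from importlib.metadata import PackageNotFoundError, version

FIXED_RELEASE = (2, 9, 4)  # first patched version named in this advisory


def parse_release(version_string: str) -> tuple:
    """Parse 'X.Y.Z' into a 3-tuple of ints, ignoring non-numeric suffixes."""
    numbers = []
    for piece in version_string.split(".")[:3]:
        match = re.match(r"\d+", piece)
        numbers.append(int(match.group()) if match else 0)
    while len(numbers) < 3:
        numbers.append(0)
    return tuple(numbers)


def is_vulnerable(version_string: str) -> bool:
    """Return True if the version is older than the fixed release."""
    return parse_release(version_string) < FIXED_RELEASE


def installed_chainlit_status() -> str:
    """Report the state of the chainlit package in the current environment."""
    try:
        installed = version("chainlit")
    except PackageNotFoundError:
        return "chainlit is not installed in this environment"
    state = "VULNERABLE, upgrade to 2.9.4 or later" if is_vulnerable(installed) else "patched"
    return f"chainlit {installed}: {state}"


if __name__ == "__main__":
    print(installed_chainlit_status())
```

Running the script inside each virtual environment or container image that ships Chainlit gives a quick inventory. It only reports what is installed, not whether the service is reachable from the internet.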

Takeaway

The discovery of ChainLeak highlights the enduring presence of conventional web vulnerabilities, such as arbitrary file read and Server-Side Request Forgery (SSRF), within modern AI frameworks. These flaws enable attackers to bypass AI layers and gain access to sensitive infrastructure.

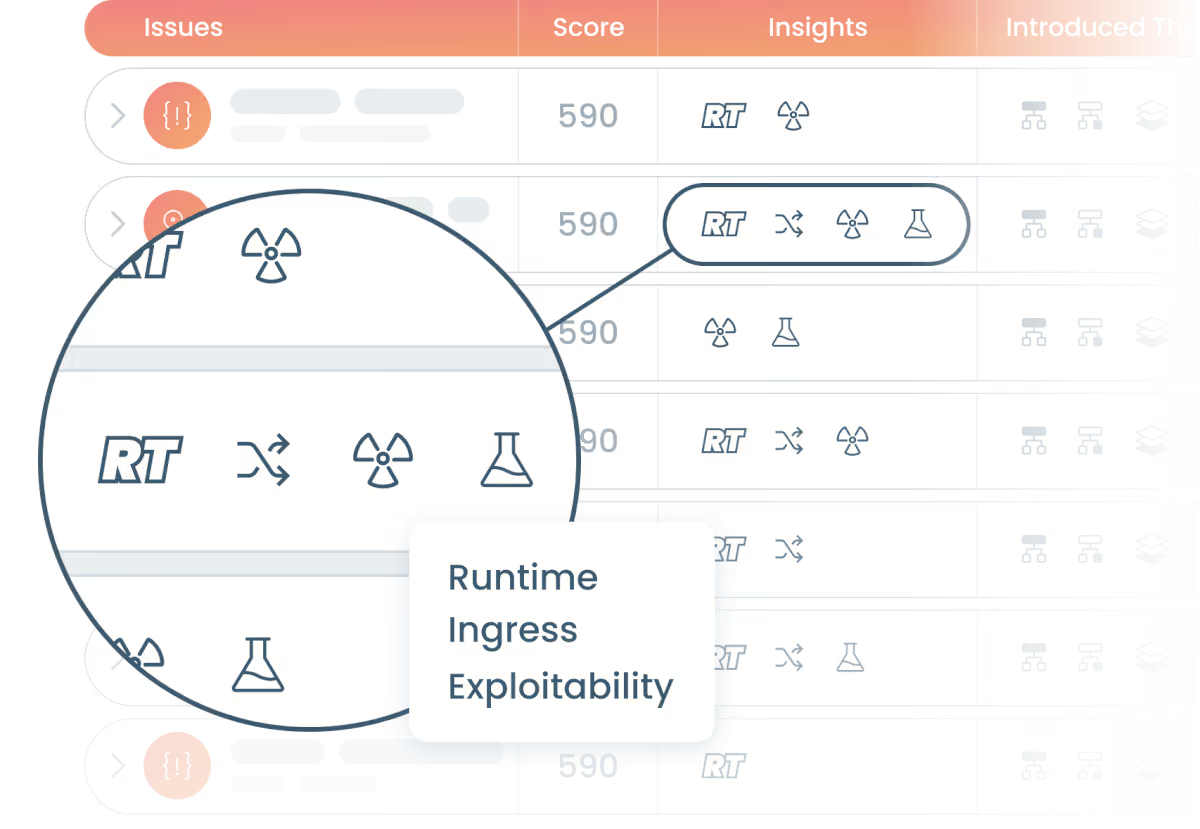

Organizations utilizing Chainlit in production must immediately prioritize patching these issues. Security teams face the critical task of not only identifying vulnerable AI components but also determining which ones are actually accessible, executed, and exposed within their live production environments.

References

- CSO Online. (2026 January 20). Flaws in Chainlit AI Dev Framework Expose Servers to Compromise. https://www.csoonline.com/article/4119469/flaws-in-chainlit-ai-dev-framework-expose-servers-to-compromise.html

- Infosecurity Magazine. (2026 January 21). Chainlit Security Flaws Expose AI Applications. https://www.infosecurity-magazine.com/news/chainlit-security-flaws-ai-apps

- NVD. (2026 January 20). CVE-2026-22218 Detail. https://nvd.nist.gov/vuln/detail/CVE-2026-22218

- NVD. (2026 January 20). CVE-2026-22219 Detail. https://nvd.nist.gov/vuln/detail/CVE-2026-22219

- SecurityWeek. (2026 January 20). Chainlit Vulnerabilities May Leak Sensitive Information. https://www.securityweek.com/chainlit-vulnerabilities-may-leak-sensitive-information/

- The Register. (2026 January 20). AI framework flaws put enterprise clouds at risk. https://www.theregister.com/2026/01/20/ai_framework_flaws_enterprise_clouds/

- Kodem Security. (2025 September 5). LangChain, LangGraph, CrewAI: Security Issues in AI Agent Frameworks for JavaScript and TypeScript. https://www.kodemsecurity.com/resources/langchain-langgraph-crewai-security-issues-in-ai-agent-frameworks-for-javascript-and-typescript

- Kodem Security. (2025 September 5). Security Risks Across the AI Application Stack: A Researcher’s Guide. https://www.kodemsecurity.com/resources/security-risks-across-the-ai-application-stack-a-researchers-guide

- Zafran Security Research. (2026 January 20). ChainLeak: Critical AI Framework Vulnerabilities Expose Data, Enable Cloud Takeover. https://www.zafran.io/resources/chainleak-critical-ai-framework-vulnerabilities-expose-data-enable-cloud-takeover
