Turn the Lights On: Why AI Governance Cannot Rely on Traffic Inspection Alone

Introduction: The Next Visibility Problem in AI Security

The first generation of enterprise AI governance and security controls has emerged rapidly. As organizations moved to adopt generative AI tools, security teams responded by deploying controls designed to monitor how applications interact with large language models. AI gateways, prompt-inspection systems, model firewalls, and API security platforms now inspect traffic between applications and model endpoints in order to detect policy violations, data leakage, and prompt-injection attempts.

This architecture represents the current standard of care for AI security. Most governance systems observe prompts, analyze responses, and enforce guardrails at the network or API boundary where applications communicate with external models. This approach made sense when enterprise AI usage was concentrated in calls to hosted model providers. If applications primarily interact with AI through network requests, inspecting those requests appears to provide a natural governance control point.

However, the architecture of AI systems is already evolving. AI is increasingly becoming embedded infrastructure inside software systems, not merely an external service accessed through APIs. Developers now integrate model libraries, agent frameworks, and inference engines directly into applications. Self-hosted models are deployed inside containers. AI SDKs appear deep within dependency trees. Model artifacts may be dynamically loaded into memory during runtime.

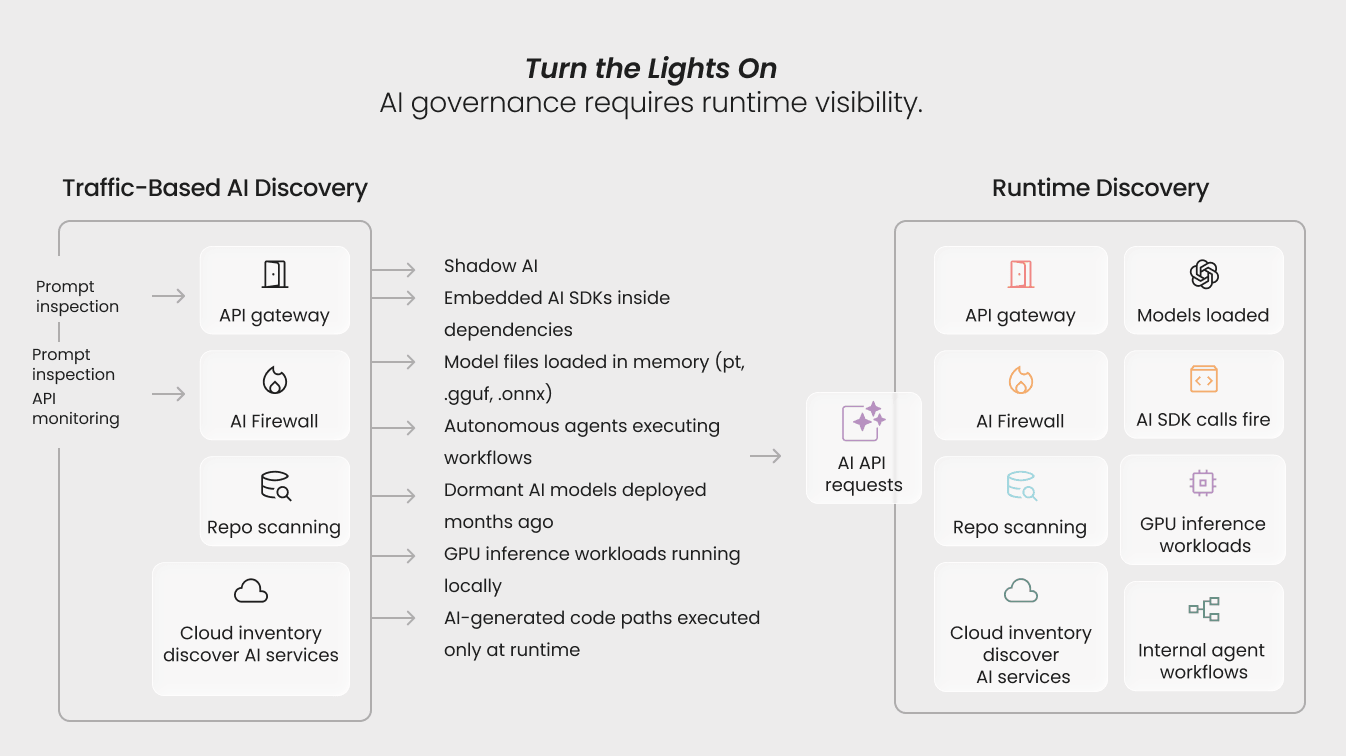

In this environment, inspecting traffic to AI endpoints cannot provide a complete picture of enterprise AI activity. The core governance question is therefore changing. It is no longer simply “What AI traffic do we observe?” It is increasingly “What AI systems are actually executing?”

The Current Standard of Care in AI Governance

Most enterprise AI governance architectures today rely on three discovery mechanisms: repository scanning, infrastructure inventory, and traffic inspection.

Repository and Dependency Scanning

Security teams often begin by scanning repositories to identify AI frameworks, model libraries, and agent dependencies embedded in source code. Static analysis tools can detect references to machine-learning frameworks and generative AI packages during development.

This approach is valuable for identifying potential AI usage early in the software lifecycle. However, static scanning primarily reveals declared dependencies rather than runtime execution.
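To make the distinction concrete, here is a minimal Python sketch of the kind of check a dependency scanner performs. It assumes a pip-style `requirements.txt` and a hypothetical watchlist of AI-related package names. Real scanners also resolve lockfiles and transitive dependencies, which this sketch does not attempt. Note that its output is only a list of declarations: nothing in it says whether any of these packages is ever imported or called.

```python
import re

# Hypothetical watchlist of AI-related PyPI distribution names; illustrative, not exhaustive.
AI_PACKAGES = {"openai", "anthropic", "transformers", "langchain", "llama-cpp-python", "torch"}

# Leading project name of a requirement line, e.g. "transformers" in "transformers[torch]>=4.40".
_REQUIREMENT_NAME = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)")


def normalize(name: str) -> str:
    """PEP 503 name normalization: lowercase, and collapse runs of '-', '_' or '.' into '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def declared_ai_dependencies(requirements_text: str) -> set[str]:
    """Return the watchlisted packages declared in a requirements file's text."""
    found = set()
    for raw_line in requirements_text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop inline and full-line comments
        if not line or line.startswith("-"):
            continue  # blank line or pip option such as "-r other.txt" / "-e ."
        match = _REQUIREMENT_NAME.match(line)
        if match and normalize(match.group(1)) in AI_PACKAGES:
            found.add(normalize(match.group(1)))
    return found


if __name__ == "__main__":
    example = """\
requests==2.31.0
openai>=1.0  # chat client
Llama_CPP.Python==0.2.90
transformers[torch]>=4.40
# torch is pulled in transitively
-r dev-requirements.txt
"""
    print(sorted(declared_ai_dependencies(example)))
    # ['llama-cpp-python', 'openai', 'transformers']
```

The scan correctly flags three declared AI packages, yet it cannot tell a library that runs on every request from one that is never loaded.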

Large-scale evaluations of AI-generated code continue to demonstrate why development-time scanning alone is insufficient. For example, research analyzing outputs from leading language models found that nearly half of AI-generated code samples fail common security tests, frequently introducing vulnerabilities associated with the OWASP Top 10 (Veracode, 2025). These failures often emerge only when code interacts with real execution environments.

Cloud Infrastructure Inventory

Cloud security platforms provide a second discovery layer by identifying AI services deployed in enterprise infrastructure. Configuration analysis can detect managed machine-learning services, model endpoints, and inference pipelines provisioned in cloud environments.

These inventories are useful for identifying officially deployed AI systems. However, infrastructure discovery primarily reflects what was provisioned, not necessarily what is executing inside application environments at runtime.

Traffic Inspection and AI Gateways

The third, and increasingly prominent, governance layer relies on network-based monitoring through AI gateways and API security platforms. These systems inspect requests flowing to model endpoints and analyze prompts and responses for anomalies.

Traffic inspection has become central to many enterprise AI security strategies. Monitoring AI API interactions helps organizations detect prompt injection attempts, data leakage risks, and abnormal usage patterns.

Yet traffic-based visibility inherently observes communication with AI systems, not the systems themselves.
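A gateway's view of the world can be sketched in a few lines. The Python below is a simplified illustration, not any product's logic: the host list and the single email-matching leakage rule are assumptions made for the example. Its key property is structural. The inspection function only runs on requests that are routed to it and addressed to a known model endpoint, so a model running inside the application process never produces an input for it.

```python
import re
from dataclasses import dataclass

# Hypothetical model-provider hosts this gateway is configured to treat as AI traffic.
AI_ENDPOINT_HOSTS = {"api.openai.com", "api.anthropic.com"}

# Illustrative data-leakage rule: flag prompts that appear to contain an email address.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass(frozen=True)
class OutboundRequest:
    host: str
    prompt: str


def inspect_request(request: OutboundRequest) -> list[str]:
    """Return findings for one outbound request, as a gateway might."""
    if request.host not in AI_ENDPOINT_HOSTS:
        return []  # not recognized as AI traffic, so nothing is inspected
    findings = ["ai-call"]
    if EMAIL_PATTERN.search(request.prompt):
        findings.append("possible-data-leakage")
    return findings


print(inspect_request(OutboundRequest("api.openai.com", "Summarize the thread from bob@example.com")))
# ['ai-call', 'possible-data-leakage']
```

A call to a locally loaded model never becomes an `OutboundRequest`, so `inspect_request` has nothing to evaluate, however capable its rules are.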

The Emerging Visibility Gap in AI Security

The limitations of traffic-centric governance models are becoming more apparent as AI capabilities diffuse across enterprise environments.

Shadow AI and Unmanaged Systems

The rapid adoption of AI tools has created a new category of risk: shadow AI, where employees deploy AI capabilities outside official governance frameworks.

Recent industry research highlights the scale of the problem. A global survey of enterprise organizations found that more than 70% of companies report limited visibility into how generative AI tools are used internally, while unmanaged AI usage continues to expand across development and knowledge-work environments (Cyberhaven, 2025).

Shadow AI deployments often bypass the gateways and proxies designed to monitor AI traffic.

Agentic Systems and Autonomous Workflows

Another emerging challenge is the growth of agent-based AI systems. Autonomous agents orchestrate workflows by interacting with APIs, models, and enterprise tools.

In these systems, the observable API request may represent only one step in a much larger chain of execution involving multiple models, tools, and data sources.

From a governance perspective, inspecting a single network request provides only partial visibility into the overall AI workflow.
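A toy trace illustrates the point. The workflow below is hypothetical: the step names, and the assumption that only the hosted-model call is routed through the gateway, are illustrative. When the trace is filtered down to what the gateway can see, a single step remains.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    kind: str          # "local_model", "hosted_model", "tool", or "data"
    via_gateway: bool  # True only if this step's traffic passes through the AI gateway


# Hypothetical agent workflow; names and routing are assumptions for illustration.
WORKFLOW = [
    Step("plan task", "local_model", False),
    Step("query CRM", "tool", False),
    Step("read customer file", "data", False),
    Step("draft reply", "hosted_model", True),
    Step("send email", "tool", False),
]


def gateway_view(steps: list[Step]) -> list[str]:
    """Names of the steps an AI gateway would observe."""
    return [step.name for step in steps if step.via_gateway]


visible = gateway_view(WORKFLOW)
print(f"{len(visible)} of {len(WORKFLOW)} steps visible: {visible}")
# 1 of 5 steps visible: ['draft reply']
```

Even the local planning step, which is itself a model invocation, is absent from the gateway's record.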

Embedded AI Components

Developers increasingly embed AI capabilities directly inside applications through SDKs, inference engines, and local model deployments.

These components often execute within application processes rather than interacting with external model providers. As a result, they may never generate traffic that passes through AI gateways or API inspection layers.

The Developer Velocity Problem

AI adoption is also expanding faster than governance mechanisms can adapt. Studies of enterprise developer behavior suggest that AI tools are rapidly becoming integrated into everyday development workflows.

Research analyzing enterprise adoption patterns indicates that AI coding assistants and automated development tools are now used by a majority of development teams, accelerating the pace at which new AI-enabled code enters production environments (GitHub Research, 2025).

This velocity compounds the visibility challenge for security teams.

Traffic Visibility vs. Runtime Visibility

Traffic inspection answers a critical governance question:

What requests are being made to AI systems?

However, modern AI environments require answering a broader one:

What AI components are actually executing inside our environment?

The difference becomes evident in several common scenarios. In the first, a development team deploys a self-hosted model inside a containerized application. The model is loaded directly into memory when the service starts. Because the system does not rely on external APIs, no gateway or traffic-inspection system observes the model’s operation.

In another scenario, a developer introduces an AI SDK through a dependency update. Static scanning identifies the presence of the library but cannot determine whether the application actually executes it. Only runtime observation reveals whether the model interface is invoked.
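In a Python service, one coarse runtime signal is already available in-process: `sys.modules` records every module the interpreter has actually imported. The sketch below compares a set of declared import names (for example, a dependency scan's output mapped to import names) against that table. Two caveats apply. It is a point-in-time snapshot, so it should be taken after the code paths of interest have run, and importing a module is weaker evidence than calling its model interface, which would require instrumenting the SDK itself. Import names can also differ from package names; `llama-cpp-python`, for instance, is imported as `llama_cpp`.

```python
import sys


def runtime_module_status(declared_imports: set[str]) -> dict[str, set[str]]:
    """Split declared top-level import names into those this process has loaded and those it has not."""
    loaded = {name for name in declared_imports if name in sys.modules}
    return {"loaded": loaded, "declared_only": set(declared_imports) - loaded}


if __name__ == "__main__":
    import json  # stands in for an SDK that the application really imports

    # "openai_sdk_never_imported" is a hypothetical name for an SDK that is declared but never loaded.
    print(runtime_module_status({"json", "openai_sdk_never_imported"}))
    # {'loaded': {'json'}, 'declared_only': {'openai_sdk_never_imported'}}
```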

Similarly, model artifacts, such as fine-tuned weights or local inference engines, may be loaded dynamically during application execution. These artifacts may never appear in repository scans or infrastructure inventories.
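Python's audit hooks (PEP 578, Python 3.8+) offer one way to observe this from inside a process: `open()` raises an `open` audit event carrying the path being opened. The sketch below records any opened file whose extension looks like a model artifact. The extension list is an assumption for illustration, and the approach has real limits. Audit hooks cannot be removed once added, they receive every audit event and so must stay cheap, and files opened by native code that bypasses Python's I/O layer (as compiled inference engines may do) produce no event at all, so those would need observation below the interpreter.

```python
import os
import sys

# Hypothetical extensions treated as model artifacts in this sketch; adjust to your environment.
MODEL_ARTIFACT_SUFFIXES = (".safetensors", ".gguf", ".onnx", ".pt")

observed_artifacts: set[str] = set()


def _record_model_artifacts(event: str, args: tuple) -> None:
    """Audit hook: remember paths of model-artifact files opened through Python's I/O layer."""
    if event != "open" or not args:
        return
    path = args[0]
    if isinstance(path, int):
        return  # opened from an existing file descriptor; there is no path to inspect
    try:
        name = os.fsdecode(path)
    except Exception:
        return  # an observation hook must never make the application's own I/O fail
    if name.lower().endswith(MODEL_ARTIFACT_SUFFIXES):
        observed_artifacts.add(name)


# Installed for the lifetime of the process; audit hooks cannot be removed.
sys.addaudithook(_record_model_artifacts)


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as workdir:
        weights = os.path.join(workdir, "adapter.gguf")
        with open(weights, "wb") as handle:  # stands in for an engine loading weights
            handle.write(b"\x00")
        print(weights in observed_artifacts)  # True
```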

In each of these cases, governance systems that rely solely on traffic inspection may miss critical AI components operating within the application environment.

Implications for AI Governance and Policy

The visibility gap has implications that extend beyond enterprise security operations.

Compliance and Regulatory Oversight

Emerging governance frameworks increasingly require organizations to maintain accurate inventories of AI systems operating in production environments. Discovery mechanisms based solely on development artifacts or traffic inspection may struggle to provide the operational evidence required by regulators.

Operational Risk Management

Security teams cannot assess the risk posture of systems they cannot observe. Runtime visibility is necessary to understand how AI components interact with data pipelines, infrastructure resources, and business workflows.

Policy Enforcement Across the SDLC

Governance frameworks often define which models, libraries, or AI services are permitted. However, those policies can only be enforced if organizations can verify runtime behavior across the software lifecycle.

Without runtime verification, governance frameworks risk becoming advisory rather than enforceable.
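At its simplest, runtime enforcement is a comparison between what was observed executing and what the policy permits. The sketch below assumes an observed inventory already grouped by category (the categories, allowlist entries, model name, and file path are hypothetical) and treats any category the policy does not mention as default-deny. What matters is the input: without a runtime-observed inventory, there is nothing trustworthy to compare the policy against.

```python
# Hypothetical allowlist; categories and entries are illustrative assumptions.
ALLOWED: dict[str, set[str]] = {
    "libraries": {"openai", "transformers"},
    "models": {"approved-summarizer-v2"},
}


def policy_violations(observed: dict[str, set[str]],
                      allowed: dict[str, set[str]] = ALLOWED) -> dict[str, set[str]]:
    """Return, per category, runtime-observed items the policy does not permit.

    Categories absent from the allowlist are default-deny: everything observed in them is reported.
    """
    violations: dict[str, set[str]] = {}
    for category, items in observed.items():
        unapproved = set(items) - allowed.get(category, set())
        if unapproved:
            violations[category] = unapproved
    return violations


observed_at_runtime = {
    "libraries": {"openai", "llama-cpp-python"},
    "models": {"approved-summarizer-v2"},
    "artifacts": {"/srv/app/weights/adapter.gguf"},
}
print(policy_violations(observed_at_runtime))
# {'libraries': {'llama-cpp-python'}, 'artifacts': {'/srv/app/weights/adapter.gguf'}}
```

Default-deny for unlisted categories is a deliberate choice here: a newly observed kind of AI component surfaces as a finding instead of passing silently.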

Turning the Lights On

The current generation of AI governance tools represents an important first step in managing generative AI risk. Monitoring prompts, scanning repositories, and inspecting API traffic provide valuable signals about how AI systems are used.

But they cannot provide complete visibility.

As AI becomes embedded in enterprise software systems, governance architectures must evolve to observe the environments where AI components actually execute.

This transition mirrors earlier shifts in cybersecurity. Organizations once relied primarily on perimeter monitoring and network visibility. Over time, defenders recognized that threats increasingly operated within application processes and memory space, requiring deeper forms of runtime visibility.

AI governance is approaching a similar moment.

The central question for the next phase of enterprise AI governance is not simply where AI appears in code or which APIs applications call. It is whether organizations can see the AI systems operating inside their environments.

Answering that question requires turning the lights on.

References

Cyberhaven. (2025). Shadow AI report: Enterprise adoption and security risks. Retrieved from https://cyberhaven.com/resources/reports/2025-shadow-ai-report

GitHub Research. (2025). AI developer productivity report. Retrieved from https://github.blog/news-insights/research/2025-developer-productivity-ai-report

Veracode. (2025). GenAI code security report. Retrieved from https://www.veracode.com/resources/reports/genai-code-security-report-2025
