Introduction

The adoption of large language models (LLMs) as coding assistants has accelerated rapidly. GitHub’s 2024 developer survey found that 97% of developers have used AI coding tools, with many organizations now relying heavily on these technologies for rapid prototyping, MVP development, and production releases [1]. This increased reliance on AI-generated code introduces non-trivial security risks.

Empirical research documents these risks in detail. Veracode’s 2025 GenAI Code Security Report evaluated more than 100 LLMs across 80 coding tasks and found that only 55% of AI-generated code was secure—meaning nearly half of all AI-generated code introduces known security flaws [2]. Controlled experiments on iterative AI code generation show that security vulnerabilities frequently persist and often increase through multi-round feedback loops with LLMs, with efficiency-focused prompts associated with the most severe outcomes [3]. Large-scale analysis of AI-attributed repositories on GitHub identified 4,241 CWE instances across 77 distinct vulnerability types in a sample of 7,703 files [4]. Enterprise data amplifies the concern: Apiiro’s 2025 research across Fortune 50 companies found that by June 2025, AI-generated code was introducing over 10,000 new security findings per month—a 10× increase from December 2024—with privilege-escalation paths up 322% and architectural design flaws up 153% [5].

Against this backdrop, vendors are introducing tooling such as Claude Code Security and Mythos to scan AI-generated code. These systems use LLMs to identify insecure patterns in repositories and code reviews, bringing sophisticated reasoning to static analysis. However, static scanning alone does not close the security gap. As new LLM features expand code-generation capabilities, vulnerabilities increasingly manifest at runtime, where models shape control flow and interact with external services. This position paper argues that runtime observability is now the most critical capability for securing AI-generated software.

Limitations of Static AI Code Scanning

Inference vs. Execution

Most AI discovery platforms, including Claude Code Security and Mythos, detect AI use by scanning repositories, API calls, or dependency imports. These methods yield an inventory of models and libraries but rely on inference rather than direct observation of execution. They cannot determine whether AI-generated code is actually invoked in production, whether it deviates from the reviewed version, or how it interacts with real data.

The runtime behavior of AI-generated code can differ markedly from design-time expectations. Production-level research at Montycloud found that deviations from expected policies in agentic systems surfaced only during runtime execution rather than in static validation phases, directly motivating the need for runtime assessment frameworks [6]. This gap cannot be addressed by pre-deployment scanning alone.

Vulnerability Accumulation Through Feedback Loops

Static scanners cannot account for the feedback loop inherent in AI-assisted coding. Developers often refine AI-generated code by repeatedly prompting the model, creating a sequence of modifications. Shukla et al.’s controlled experiment with 400 code samples across 40 rounds of generation found that vulnerability counts varied markedly across different prompting strategies and that security vulnerabilities frequently increased through iterative feedback [3]. Static tools may approve an initial version but cannot prevent later prompts from reintroducing unsafe patterns. Notably, among security-focused prompts, only 27% of iterations resulted in net security improvements, and these were concentrated in early iterations [3].

Blind Spots in Supply-Chain Security

Scanning repositories also fails to address supply-chain risk. Schreiber and Tippe’s large-scale GitHub analysis found that Python AI-generated code exhibited vulnerability rates of 16–18%, with significant differences in security density across tools [4]. The CSET Cybersecurity Risks of AI-Generated Code report demonstrated that code generation models often produce insecure code with common and impactful security weaknesses, and cautioned that further empirical testing across a greater range of models and tasks is needed before organizations can trust AI-assisted code without rigorous review [7]. These findings show that static scanning provides at best partial assurance; vulnerabilities often emerge from dynamic interactions with runtime environments, external data, and APIs.

Necessity of Runtime Observability

Dynamic Decision Paths

At runtime, AI-generated code can alter execution paths based on user prompts, model outputs, or external context. This dynamic behavior is opaque to static scanners. Runtime observability instruments the application to record function-level execution, enabling defenders to see which model-driven functions are executed, how often they run, and what data they process. Without such telemetry, security teams are effectively blind once AI-generated code is merged into production.

The industry has recognized this gap. Datadog’s AI Agent Monitoring capability, made generally available in June 2025, maps each agent’s decision path—inputs, tool invocations, calls to other agents, and outputs—specifically to address the complexity of non-deterministic agentic systems that cannot be fully understood through static analysis [8]. The product arose precisely because complex, distributed, and non-deterministic agent systems cannot be adequately understood before they run.

Detecting and Preventing Attacks

Runtime observability also allows detection of exploitation techniques unique to AI-powered systems. Consider three scenarios:

- Prompt injection during inference: Attackers craft input that causes a model to request secrets or exfiltrate data. Research finds that 94.4% of state-of-the-art LLM agents are vulnerable to prompt injection, and real-world exploitation has already occurred: in mid-2025, the EchoLeak vulnerability (CVE-2025-32711) against Microsoft Copilot allowed infected email messages to trigger the agent to exfiltrate sensitive data automatically, without user interaction [9]. Static scans cannot detect these attacks because the malicious behavior emerges from the model’s runtime interaction with the prompt.

- Insecure code execution paths: AI-generated code may include latent debugging constructs that are executed only under certain conditions. Monitoring function calls at runtime reveals when high-risk operations are invoked and allows immediate enforcement of security policies before damage occurs.

- Model drift and hidden model updates: Static inventories become stale quickly. Runtime observability can detect new models being loaded in memory, unknown API endpoints, or shifts in prompt-response behavior, allowing governance teams to prevent unapproved models from running.

The iterative feedback research further supports this view. Shukla et al. conclude that human oversight and runtime validation are necessary to catch vulnerabilities introduced during iterative improvements [3]. Without runtime observability, such vulnerabilities will persist until exploitation.

Complementing Static Analysis

Runtime observability should not replace static scanning but complement it. Static tools like Claude Code Security and Mythos quickly identify known insecure patterns and enforce coding standards at the point of development. Runtime observability extends protection into production, verifying that AI-generated code behaves as expected under real workloads, detecting anomalies, and providing telemetry to investigate incidents. Together, they form a defense-in-depth strategy for AI-assisted development.

Kodem’s Contributions and Forward-Looking Governance

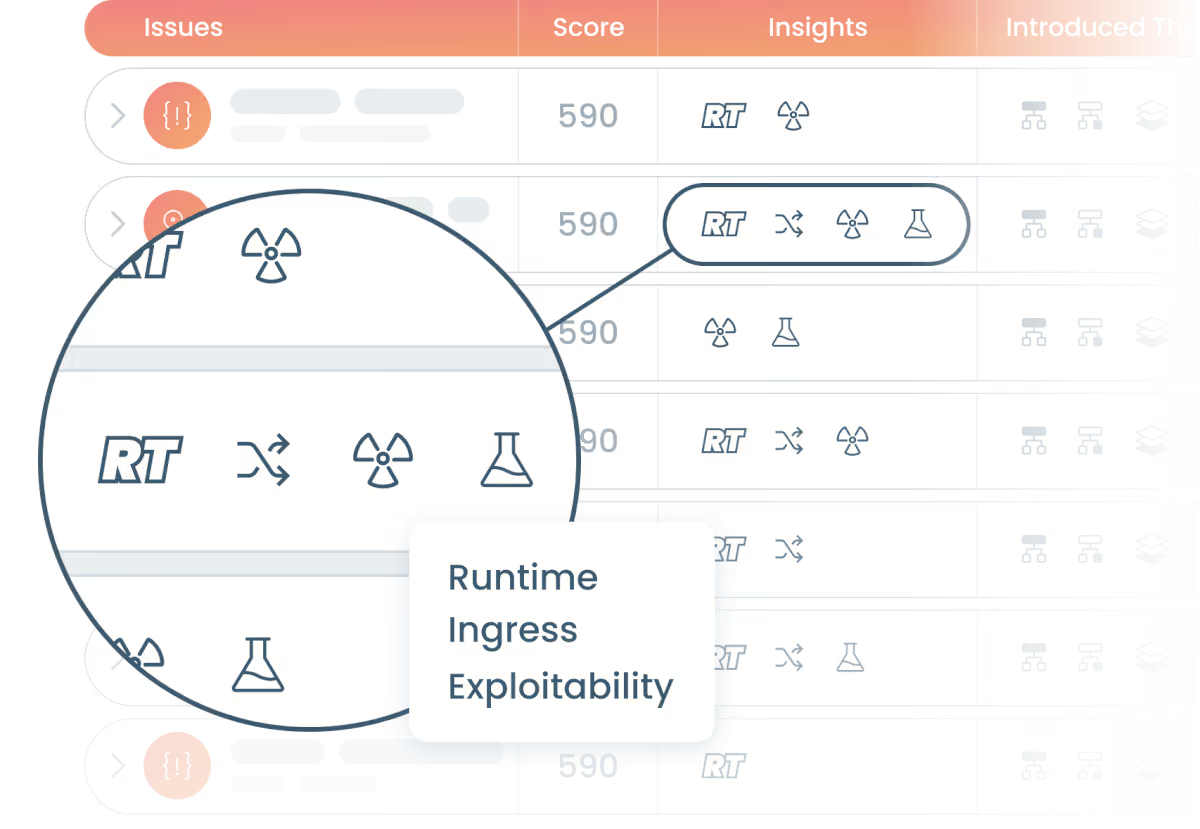

Kodem delivers an integrated platform for AI discovery and runtime illumination. The discovery component scans repository metadata, API endpoints, and package manifests to build an inventory of AI models and dependencies, similar to Claude Code Security and Mythos. The runtime illumination component instruments applications at the function level. When AI-generated functions execute in production, Kodem records their execution paths, the data they access, and downstream dependencies. This visibility allows security teams to:

- Identify which AI-influenced functions actually run in production.

- See how often they execute and under what conditions.

- Detect sensitive data flows and unusual call patterns.

- Prioritize remediation based on actual runtime exposure rather than theoretical risk.

Beyond observability, Kodem is developing forward-looking governance capabilities. These include policy enforcement that blocks AI-generated functions if they invoke prohibited operations (e.g., system commands, network calls to unapproved domains), integration with model registries to ensure only approved models are loaded, and continuous monitoring to detect model drift or unapproved updates. Kodem also plans to leverage runtime insights to automatically generate testing harnesses that reproduce production execution paths, enabling more accurate pre-deployment testing.

Implications for Research and Practice

For AI security researchers, the emergence of Claude Code Security and Mythos underscores industry recognition of the risks associated with AI-generated code. However, these tools are only the first step. Runtime observability addresses the dynamic nature of AI-driven systems and offers rich telemetry for empirical security research. Georgia Tech’s Vibe Security Radar project, launched in May 2025, is already attempting to track CVEs directly attributable to AI coding tools using commit metadata and agent-based root-cause analysis—a methodology that depends on runtime and deployment-time signals rather than static code inspection alone [10]. Researchers can use runtime data to study how vulnerabilities evolve across prompts, how models interact with dependencies, and how attackers exploit prompt injection or supply-chain weaknesses.

AppSec practitioners should integrate runtime observability into their software development life cycles. Static scanning should be treated as necessary but insufficient, requiring runtime verification before and after deploying AI-generated code. Security teams should monitor AI-driven functions for deviations from expected behavior and enforce policies that prevent models from performing sensitive operations without authorization. Governance processes should account for model updates and prompt changes throughout the software life cycle, using runtime data to ensure ongoing compliance with frameworks such as the OWASP Top 10 for LLM Applications and MITRE ATLAS.

Conclusion

The introduction of tools like Claude Code Security and Mythos marks an important milestone in securing AI-generated code, but they primarily address the code before it runs. Research shows that vulnerabilities proliferate during iterative AI interactions [3], that AI-generated code frequently contains high-severity vulnerabilities across enterprise codebases [5], and that agentic systems surface unexpected behaviors only at runtime [6]. By instrumenting applications and observing AI-driven functions in production, runtime observability provides the ground truth needed for effective AI governance. It enables detection of prompt injection, insecure execution paths, and model drift, offering a layer of defense unavailable to static tools. As AI-assisted coding becomes ubiquitous, runtime observability will be indispensable for researchers and practitioners seeking to safeguard the integrity of modern software.

References

[1] GitHub. (2024). Developer survey: AI’s impact on the developer experience. GitHub Blog.

[2] Veracode. (2025). 2025 GenAI Code Security Report.

[5] Apiiro. (2025). 4x velocity, 10x vulnerabilities: AI coding assistants are shipping more risks.

[6] Moshkovich, D., & Zeltyn, S. (2025). Taming uncertainty via automation: Observing, analyzing, and optimizing agentic AI systems. arXiv:2507.11277.

Related blogs

Turn the Lights On: AI Governance Through Runtime Enforcement

Enterprise AI governance is rapidly evolving from discovery to visibility. Organizations have begun identifying where AI exists and, more recently, illuminating how AI behaves at runtime. Nevertheless, true governance demands more than just visibility, it requires enforcement.

5

Turn the Lights On: From AI Discovery to Runtime Illumination

Enterprise AI governance has rapidly converged on discovery mechanisms centered around traffic inspection and external observation. While these approaches provide partial visibility into model usage, they rely on inference rather than direct observation of execution. Recent research (2025 - 2026) demonstrates that critical AI security risks, including prompt injection, agent hijacking and tool-level exploitation, manifest primarily at runtime and are often invisible to boundary-based monitoring. This post argues for a shift from discovery to runtime illumination, a model that treats execution as the primary source of truth for AI governance.

5

Turn the Lights On: Why AI Governance Cannot Rely on Traffic Inspection Alone

Inspecting traffic to AI endpoints cannot provide a complete picture of enterprise AI activity. The core governance question is therefore changing. It is no longer simply “What AI traffic do we observe?” It is increasingly “What AI systems are actually executing?”

5

A Primer on Runtime Intelligence

See how Kodem's cutting-edge sensor technology revolutionizes application monitoring at the kernel level.

Platform Overview Video

Watch our short platform overview video to see how Kodem discovers real security risks in your code at runtime.

The State of the Application Security Workflow

This report aims to equip readers with actionable insights that can help future-proof their security programs. Kodem, the publisher of this report, purpose built a platform that bridges these gaps by unifying shift-left strategies with runtime monitoring and protection.

.avif)

Get real-time insights across the full stack…code, containers, OS, and memory

Watch how Kodem’s runtime security platform detects and blocks attacks before they cause damage. No guesswork. Just precise, automated protection.